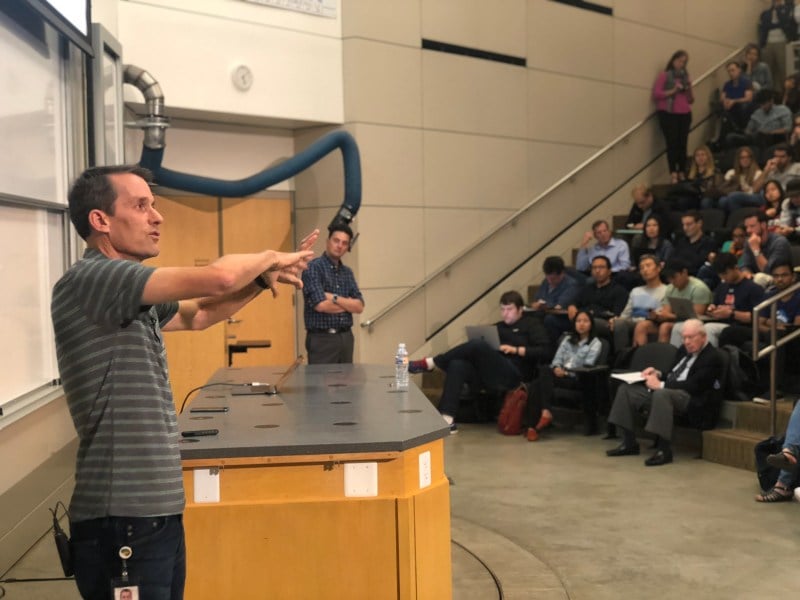

Google Senior Fellow and Head of Artificial Intelligence (AI) Jeff Dean discussed the future of AI Monday in Hewlett Teaching Center, describing Google’s efforts to push the boundaries of what computers can do. The talk was part of the Institute for Computational and Mathematical Engineering’s ongoing AI in Real Life series, in which industry professionals share their interactions with the emerging technology.

Dean said AI technologies are revolutionizing human lives, specifically in healthcare. He cited augmented reality microscopes as exemplifying this trend.

“We can take a microscope image, apply a model and highlight the interesting bits,” Dean said.

He also discussed Google’s research on medical problems such as diabetic retinopathy, a complication of diabetes that affects the eyes. He showed how Google’s algorithms, using just a smartphone, can detect the disease more accurately than ophthalmologists.

The goal, Dean said, is to provide more sophisticated insights and analyses so that doctors can provide better and more consistent treatment. For example, Dean said one objective of Google’s research is to be able to assess the mortality risk of a given patient at any given time.

Another focus of Google’s research has been creating hardware that can run modern AI algorithms on smartphones, allowing those without access to healthcare professionals to run diagnoses locally.

To that point, Dean cited specialized chips designed by Google known as tensor processing units (TPUs). High-performance TPUs are used to accelerate the training of AI models, while a smaller variant known as the Edge TPU is meant to be integrated into robots and smartphones.

“If you can build machines for reduced precision linear algebra and nothing else, you’ll be in very good shape for [machine learning],” Dean said.
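For readers curious what "reduced precision linear algebra" can look like, here is a minimal sketch in Python. It is an illustration only, not a description of how TPUs work internally: the function names, the 8-bit symmetric quantization scheme and the sample numbers are all assumptions chosen for clarity. The idea is to round numbers to small integers, do the multiply-and-add in integer arithmetic, and rescale at the end.

```python
def quantize(values, num_bits=8):
    """Map floats to signed integers using one shared scale (symmetric quantization).

    Hypothetical helper for illustration; not taken from any Google library.
    """
    max_int = 2 ** (num_bits - 1) - 1              # 127 for 8 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / max_int if max_abs > 0 else 1.0
    return [round(v / scale) for v in values], scale


def quantized_dot(a, b, num_bits=8):
    """Approximate the dot product of a and b using low-precision integers."""
    qa, scale_a = quantize(a, num_bits)
    qb, scale_b = quantize(b, num_bits)
    # The multiply-accumulate step uses only small integers.
    integer_sum = sum(x * y for x, y in zip(qa, qb))
    # A single floating-point rescale recovers an approximate result.
    return integer_sum * scale_a * scale_b


# Made-up sample data standing in for model weights and inputs.
weights = [0.12, -0.53, 0.98, 0.31]
inputs = [1.50, 0.25, -0.75, 2.00]

exact = sum(w * x for w, x in zip(weights, inputs))
approx = quantized_dot(weights, inputs)
print(f"full precision:      {exact:.4f}")
print(f"8-bit approximation: {approx:.4f}")
```

The two printed values should be close but not identical. That small loss of accuracy is the trade-off: machine learning models often tolerate it, and integer arithmetic on small numbers is generally cheaper to build into hardware than full floating-point arithmetic.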

When asked about industry usage of these chips, he said Google is open to sharing them with researchers who will publicly release their results. He added that the technology will also soon be available for purchase by companies.

Dean concluded his talk with a discussion of the future of AI as a field. The future, he said, lies not in creating many models and algorithms for distinct tasks but in having one model that can learn everything.

“We have a thousand or a million different tasks we care about,” Dean said. “We shouldn’t be training a thousand or a million different models. We should be training one model so when the million-and-oneth task comes along, we can leverage knowledge learned from previous tasks.”

Audience members praised Dean’s ability to convey nuanced topics while remaining accessible.

“Jeff was able to explain the specifics of really complicated things, like [Google’s] hardware efforts, while still being understandable to the vast majority of people,” said Ian Singer ’22.

Jimmy Le ’22 said he felt Dean’s talk was one of the best of the AI in Real Life series.

“I came out really understanding what he was saying, and I learned a lot about how Google is using AI to really impact our world,” he said.

Contact Mihir Patel at mihirp ‘at’ stanford.edu.